Experimenting with AI: Is the Bot Accurate?

- emilyidleman

- Aug 2, 2025

- 2 min read

I recently used ChatGPT, a chatbot developed by OpenAI that is designed to generate human-like text responses. I asked it to answer two questions in 250 to 300 words: Why should or shouldn't government organizations use social media? And what can they do to eliminate trolls or critics from their comment sections? The response was interesting and, honestly, reasonably accurate.

While I agree with its reasons for why government organizations should use social media, I disagree with some of the methods it suggested for how an organization or elected official can combat critics or internet trolls.

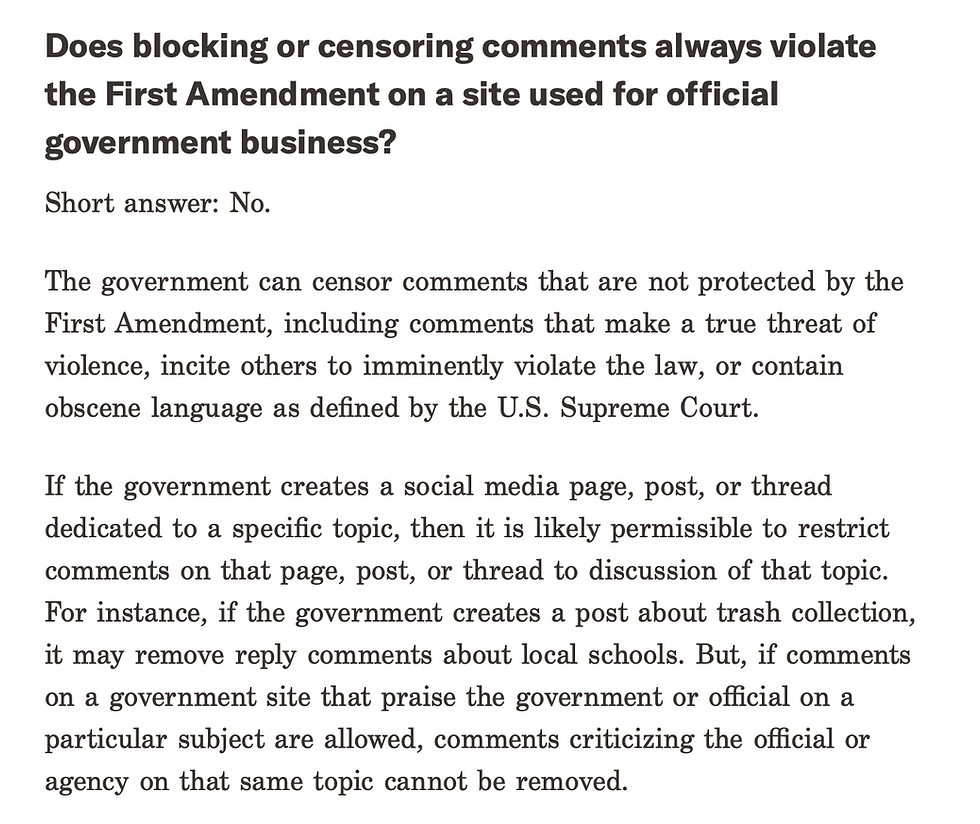

The law has only recently caught up with the use of social media by government organizations and elected officials, and even now there is still some grey area. In Lindke v. Freed (2024), the Supreme Court held that an official's social media activity counts as government action when the official has actual authority to speak on the government's behalf and is exercising that authority in the posts in question. When that is the case, the First Amendment applies, so the official or agency generally cannot delete comments or block users simply because their posts or messages are critical, unpopular, or negative. This ruling has given agencies and officials some guidance on official social media use.

The chatbot also mentioned another seemingly grey area: official guidelines. Organizations and officials can develop and publicly share social media policies that outline acceptable behavior, but only if those policies comply with the First Amendment. Comments or messages that fall outside First Amendment protection, such as threats of violence or obscene content, can be removed. Hate speech is murkier, since much of it is constitutionally protected in the United States.

This exercise affirmed a view I already held about AI: check everything. Although the response was mostly correct, it did not explain how a government organization or elected official can legally remove users from their platforms. I can't blame the chatbot entirely; it is a complicated issue governed by vague laws. In my own research, I struggled to find clear, legally sound guidelines that organizations and officials can follow when removing or blocking users.

So, is AI accurate? I certainly wouldn't use it as my main source, or for legal help. My advice to anyone looking for clarity: consult your legal team.
